Tracking Parity

HHVM has a large suite of unit tests that must pass in several build configurations before a commit reaches master. Unfortunately, a passing test suite doesn’t tell you whether HHVM can be used for anything useful, so we periodically run the test suites of popular open source frameworks.

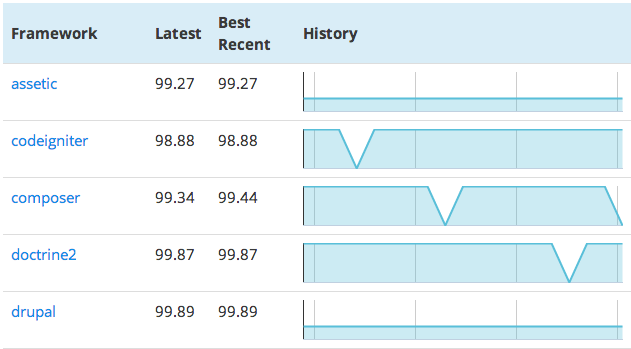

The frameworks test page is now public, as is the JSON data backing it (which you’re welcome to use).

What’s tested

We aim to test the latest stable version of each framework, with a few exceptions:

- if fixes are required, we use the earliest version of master or develop that includes them
- if recent changes make it significantly easier to run the tests (e.g., a framework switching from a custom test runner to just ‘run phpunit’)

At the moment, the framework versions in our test runner are fairly consistent with the policy above, but we will continue to fine-tune them as needed. You can see the exact versions we use in hphp/test/frameworks/frameworks.yaml.
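The version-selection policy above can be expressed as a small function. This is a minimal sketch, not code from the test runner: `pick_version` and its inputs (a list of stable release tags and a list of `(commit, includes_fixes)` pairs from master/develop, both oldest first) are hypothetical, and the second exception (moving to a version that is easier to test) is a judgment call that it does not model.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical representation of a master/develop commit:
# (commit id, whether it includes the fixes HHVM needs).
Commit = Tuple[str, bool]


def pick_version(stable_releases: Sequence[str],
                 branch_history: Sequence[Commit],
                 fixes_required: bool) -> Optional[str]:
    """Choose which framework ref to test (hypothetical helper).

    stable_releases: stable release tags, oldest first.
    branch_history: master/develop commits, oldest first.
    """
    if not fixes_required:
        # Default: the latest stable release.
        return stable_releases[-1] if stable_releases else None
    # Fixes needed: the earliest branch commit that includes them.
    for commit_id, includes_fixes in branch_history:
        if includes_fixes:
            return commit_id
    # The fixes have not landed upstream yet.
    return None


print(pick_version(["2.3.0", "2.4.0"], [], fixes_required=False))  # 2.4.0
print(pick_version(["2.4.0"], [("a1f", False), ("b2e", True), ("c3d", True)],
                   fixes_required=True))  # b2e
```

A framework that needs no HHVM-specific fixes gets its newest stable tag, while one that does gets the first branch commit containing those fixes, which keeps the tested code as close to a release as possible.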

How it works

The test runner and its configuration are in hphp/test/frameworks/. Once an hour, a script fetches the latest version of HHVM from GitHub, does a clean debug build, and runs the framework tests in --csv mode (with the JIT, not in RepoAuthoritative mode). The results are then imported into a fairly standard MySQL+Memcached system and made available via a JSON API. The results page is built on top of that API using HHVM, XHP, and the Google Charts API.
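To make the reporting step more concrete, here is a minimal sketch of turning per-test results into per-framework pass percentages like the ones the results page charts. It assumes a hypothetical three-column `framework,test,status` CSV layout with `PASS` as the passing status; the runner's actual --csv output and the JSON API's schema may differ, and the sample rows are illustrative only.

```python
import csv
import io
import json
from collections import defaultdict


def pass_percentages(csv_text: str) -> dict:
    """Per-framework pass percentage from hypothetical framework,test,status rows."""
    passed = defaultdict(int)
    total = defaultdict(int)
    for row in csv.reader(io.StringIO(csv_text)):
        if not row:
            continue  # tolerate blank lines
        framework, _test, status = row
        total[framework] += 1
        if status == "PASS":
            passed[framework] += 1
    return {fw: round(100.0 * passed[fw] / total[fw], 2) for fw in sorted(total)}


sample = """laravel,testA,PASS
laravel,testB,FAIL
symfony,testC,PASS
"""
# Serialize the result the way a JSON API might expose it.
print(json.dumps(pass_percentages(sample)))  # {"laravel": 50.0, "symfony": 100.0}
```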

The runner runs all tests in parallel, including tests within a single framework. This is needed for the tests to complete in a reasonable amount of time, but unfortunately it makes the data noisy, as some tests have implicit interdependencies.
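One way to gauge this noise (not something the runner is described as doing) is to run the same HHVM build against the same framework versions several times and list the tests whose outcome changes between runs. The sketch below assumes each run is a hypothetical mapping from test name to status string; the test names are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Set


def unstable_tests(runs: List[Dict[str, str]]) -> Set[str]:
    """Return tests whose status differs across runs of the same build.

    Each run is a hypothetical {test name: status} mapping. Tests missing
    from some runs are compared only across the runs that include them.
    """
    statuses = defaultdict(set)
    for run in runs:
        for test, status in run.items():
            statuses[test].add(status)
    # A test that both passed and failed with identical code is likely
    # affected by ordering or shared state between parallel tests.
    return {test for test, seen in statuses.items() if len(seen) > 1}


runs = [
    {"CacheTest::testGet": "PASS", "SessionTest::testWrite": "FAIL"},
    {"CacheTest::testGet": "PASS", "SessionTest::testWrite": "PASS"},
]
print(unstable_tests(runs))  # {'SessionTest::testWrite'}
```

Tests flagged this way could then be run serially to confirm whether they depend on one another.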

Motivation

We believe the frameworks currently in the test runner are a good representative sample of real-world PHP (and we are adding more). We published a static snapshot of our parity with these frameworks late last year. As HHVM is continually developed, it is paramount that the community knows whether HHVM is getting better or worse at running real code. Having a live view of unit test pass percentages over time helps “keep us honest”.

Also, HHVM has a growing developer community, including passionate OpenAcademy students; snapshots of this data (combined with data from GitHub and VersionEye) have been invaluable for finding and prioritizing issues. Moving from occasional framework unit test snapshots to continuous data was therefore an obvious step toward making it easy for new contributors to find effective ways to contribute.

Comments

- T.J. L: The fact that the graphs don't have a common "floor" (the bottom of the Y axis is simply the recent minimum) makes the results look a lot more volatile than they are, and makes them hard to compare.

- Fred Emmott: It makes it hard to directly compare one framework to another, but we found it made it easier to see regressions/improvements within a framework, which was more important to us.

- Nano: I'd love to see PhalconPHP working with HHVM.

- Martin: I'd love to see the percentage of "skipped" tests too.

- Fred Emmott: C extension compatibility is something that's being worked on, but it is currently a lower priority than PHP frameworks. Additionally, I'd expect PhalconPHP to be less performant than pure-PHP frameworks under HHVM: pure PHP ends up as highly optimized x64 machine code, and the overhead of calling a C/C++ function (pushing arguments onto the stack instead of just using registers, etc.) is likely to outweigh any benefits of the C code. It's also likely that the JIT could generate more efficient code from PHP than GCC can from C, as we might be able to optimize away type checks/conversions, depending on usage.

- Nano: Well, maybe in terms of speed PhalconPHP doesn't get much benefit from HHVM, but it is clearly the most competitive framework at the moment. Absolutely all my (PHP) projects are built with PhalconPHP, so I hope it will be available in the future. http://www.techempower.com/benchmarks/previews/round9/#section=data-r9&hw=peak&test=json&l=sg Anyway, thanks for your work.

- Sumbobyboys: This page is really cool but seems to be broken right now! ("test-charts.js" is missing.) Thanks for all your hard work!