We are the 98.5% (and the 16%)

On November 4th, the HHVM team went on a 3-week performance and parity lockdown. The lockdown officially ended on November 22nd. Overall, this lockdown was a qualified success. Success was measured on 3 vectors:

- Did we meet our 99% average unit test pass percentage goal for the 21 open-source frameworks we tested against?

- Did we meet our goal of a 15% across-the-board performance improvement?

- Were the razors put away for the entirety of the three weeks?

Parity

Going into lockdown, the team knew that awesome performance alone would not suffice to make HHVM a viable PHP runtime out in the wild. It actually had to run real, existing PHP code reliably. So we made that a first-class work item (as it will continue to be into 2014). Our first step toward improving PHP parity was to look at GitHub, see which PHP projects were being used by many people (via their “star” rating) and make sure their unit tests would run successfully on HHVM.

Parity Results

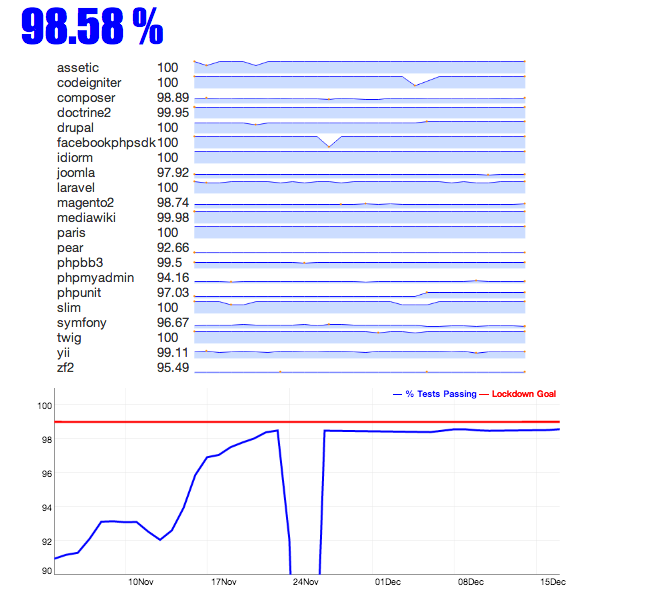

Let’s just get to the numbers. After over 125 diffs, here are the results of the parity portion of our 3-week lockdown.

Recall the table of parity before the lockdown. Now, here is our graph of improvement after the lockdown:

As you can probably see from the graph, we were successful in our efforts to improve unit test parity nearly across the board:

- The team doubled the number of open source projects with unit tests that pass at 100% on HHVM (from 4 to 8). And we have 4 more that are very close, in the 99%+ range.

- We had > 10% gains in Assetic, Symfony, Yii and CodeIgniter (I am not counting the Pear gain here since we did not have a nice baseline number to begin with).

- And all 21 frameworks are above 90% in overall unit test pass percentage.

Many of the diffs and updates that enabled our unit test parity increase are part of the HHVM 2.3 package. Some key improvements that had significant impact on our unit test results were:

- Make array not an instanceof `Traversable`

- Internationalization support

- `PDO::sqliteCreateFunction()` implementation

- The beginning of real `php.ini` support

However, there are a few curiosities…

- Why did PhpMyAdmin regress? We are not convinced this is a regression in the “we are failing more tests than before because of some code we broke” sense. This actually may have to do with our testing framework script not actually loading all of the tests properly initially. We fixed this mid-stream. That said, we are looking into the root cause of this regression, just in case we broke something.

- Why the 90 degree cliff drop-off around November 24th? We submitted a diff that we thought was going to push a framework to 100%, but instead segfaulted the runtime. It was fixed soon thereafter.

Testing Framework

The 21 open source projects that were used as our initial baseline to increase our unit test parity were, as stated above, chosen based on popularity. However, they were also chosen based on their use of PHPUnit and on how well they would work with our testing framework. Given the sheer number of unit tests that come with some of these projects (e.g., Symfony and ZF2 each have over 10K tests), we needed to come up with a testing framework that would allow us to run the tests for all of the projects quickly. So the team developed a script that would download, install and run the unit tests for all of the 21 open source projects in parallel. The script is still a work in progress, but it allowed us to run all 50K+ unit tests in 30 minutes to 1 hour (depending on whether the JIT is on) vs. the many hours it would take to run the unit tests serially. That script is located in the HHVM GitHub repository.
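To illustrate the idea of running the framework suites side by side rather than one after another, here is a minimal sketch in Python. It is not the HHVM script itself. The function name `run_frameworks_in_parallel` and the `run_one` callable (which stands in for "download, install and run one framework's tests") are assumptions made up for this example.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_frameworks_in_parallel(frameworks, run_one, max_workers=8):
    """Run `run_one(name)` for every framework concurrently.

    `run_one` is a hypothetical callable that runs one framework's unit
    tests and returns a (passed, total) tuple. It is an assumption for
    this sketch, not part of the real HHVM runner.
    """
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Submit every framework up front so the suites overlap in time.
        futures = {pool.submit(run_one, name): name for name in frameworks}
        for future in as_completed(futures):
            name = futures[future]
            try:
                results[name] = future.result()
            except Exception as exc:
                # One broken suite should not stop the others; keep the error.
                results[name] = exc
    return results
```

With this shape, the total wall-clock time is bounded by the slowest suites rather than by the sum of all of them, which is the effect the paragraph above describes.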

You may also notice that your favorite open source project is not currently in the list above. There are a couple of reasons for that. First, we couldn’t cover all open source projects in a 3-week span. Second, while open source projects like CakePHP are very important, and we will add them to our testing framework, there were some setup and configuration problems (e.g., requiring a database) that kept us from adding them in such a short time.

Finally, as discussed in our pre-lockdown blog post, the HHVM team created a bunch of “stickies” to help guide our work. The stickies were placed on a chart of “number of failing tests associated with this sticky task” vs. “number of days to implement”. So, we generally worked from top left to bottom right to get the most bang for our buck.
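One way to approximate the "top left to bottom right" ordering is to rank tasks by failing tests fixed per day of work. The sketch below assumes that ranking rule. The `Sticky` class, the `prioritize` function, and the task data a caller would pass in are all hypothetical and are not taken from the team's actual board.

```python
from dataclasses import dataclass


@dataclass
class Sticky:
    task: str                 # hypothetical task label
    failing_tests: int        # failing tests associated with this task
    days_to_implement: float  # estimated effort; assumed to be > 0


def prioritize(stickies):
    """Order tasks by failing tests per day of effort, best payoff first."""
    return sorted(
        stickies,
        key=lambda s: s.failing_tests / s.days_to_implement,
        reverse=True,
    )
```

A task that fixes many tests in few days (top left of the chart) sorts first, and a task that fixes few tests over many days (bottom right) sorts last.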

Assumptions and Caveats

It is worth pointing out some of the assumptions and caveats that went into coming up with the statistics above.

- The final, overall unit test pass percentage (98.5%) is a straight, unweighted average of the individual open source project unit test pass percentages (i.e., add all of the percentages and divide by 21). So, a project like Paris, which has 50 unit tests, carries the same percentage weight as Symfony, which has 10K unit tests. If we weighted these percentages (where Symfony and ZF2 carried more weight than Paris and Idiorm), the overall pass percentage would see a minimal drop (minimal because of the small variance in pass percentages for all of the open source projects).

- There are some unit tests that fail in HHVM, but also fail with PHP 5.5.x. We called these tests “clowny” and disregarded them in our testing framework.

- There are some unit tests that actually caused problems with our testing framework script (deadlocks, etc.). We “blacklisted” these tests and counted them as failures in terms of our pass percentage.
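The caveats above can be made concrete with a small sketch. It assumes each test carries one of four status strings (`"pass"`, `"fail"`, `"clowny"`, `"blacklisted"`). Those labels and all function names are made up for this example. The pass counts in `projects` are hypothetical too, and only the rough suite sizes (50 and 10K) come from the text.

```python
def effective_counts(statuses):
    """Return (passed, total) after applying the caveats.

    "clowny" tests are dropped entirely; "blacklisted" tests stay in the
    total and therefore count as failures.
    """
    counted = [s for s in statuses if s != "clowny"]
    passed = sum(1 for s in counted if s == "pass")
    return passed, len(counted)


def unweighted_average(projects):
    """Average of per-project pass percentages; every project counts equally."""
    percentages = [100.0 * p / t for p, t in projects.values()]
    return sum(percentages) / len(percentages)


def weighted_average(projects):
    """Pass percentage over all tests pooled; big suites dominate."""
    passed = sum(p for p, _ in projects.values())
    total = sum(t for _, t in projects.values())
    return 100.0 * passed / total


# Hypothetical (passed, total) pairs, for illustration only.
projects = {"Paris": (50, 50), "Symfony": (9800, 10000)}
```

With these made-up numbers the unweighted average sits above the weighted one, because the small suite's perfect score counts as much as the large suite's slightly lower score.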

Submitted Project Pull Requests

There were times when code changes to the actual open source project were necessary in order to successfully run all of its unit tests. For example, a change may have been necessary in order to support our parallel testing framework. In other cases, there were actual bugs in the unit tests. A really pleasant surprise during our lockdown was how awesome the project owners/maintainers were in reviewing and, in most cases, accepting our pull requests.

Travis Integration

The announcement of HHVM 2.3 talked a bit about Travis CI Integration. Now that we are starting to consistently pass unit tests for open source projects, some have added HHVM to their Travis CI build (awesome!). Here are some open source frameworks that have added us to their CI build:

Thanks to the above for adding us so quickly. And we have many pull requests outstanding for other open source frameworks to do the same.

Performance

The performance team blew through their lockdown performance goal of 15% and ended up at 16% for running facebook.com.

This can only mean good things for other PHP code out in the wild. The performance gains came from lots of small improvements, and a few big ones. Some of the biggest wins were:

- Generating code for unusual function call patterns. HHVM already generates good code for ordinary function and method calls, so some less-common patterns had started to show up in our performance profile data. The two biggest ones were `call_user_func()` and `static::method` calls, which were both handled by the interpreter. Now we generate optimized machine code for each of those call types.

- Optimizing the layout of the HHVM binary. HHVM is a big program: the compiled C++ code of HHVM clocks in at just under 100 MB. The CPU’s caches work better when the hot code footprint is small, instead of spread out across the address space, so we put the hottest functions together in one part of the binary. For an extra boost to I-TLB performance, we then mapped that part using huge pages.

- Using the interpreter to detect hot functions. HHVM interprets the first several requests to avoid wasting time and space compiling startup code. We added logic to the interpreter to find the hottest functions and compile them to a special part of the translation cache. Like the last optimization, this one improves code locality, this time for the dynamically generated code.

- Upgrading our regular expression library. Newer versions of PCRE include a JIT compiler for regular expressions, which gives HHVM a nice performance boost.
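As a rough illustration of the "detect hot functions while interpreting" idea, the sketch below counts calls during a warm-up phase and then picks the most frequently called functions as candidates to compile together. The post does not describe HHVM's actual profiling logic. Plain call counting, the class name `HotFunctionProfiler`, and its methods are all assumptions for this example.

```python
from collections import Counter


class HotFunctionProfiler:
    """Counts calls seen during interpreted warm-up requests (hypothetical)."""

    def __init__(self):
        self.calls = Counter()

    def record_call(self, function_name):
        # Would be invoked by the interpreter each time a function is entered.
        self.calls[function_name] += 1

    def hottest(self, n):
        """Return the n most-called function names, most called first."""
        return [name for name, _ in self.calls.most_common(n)]
```

Compiling the functions returned by `hottest()` into one contiguous region is what would give the locality benefit the list above describes for dynamically generated code.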

Hygiene

I am sure you are thinking to yourself, “all these gains and statistics are nice and everything, but what about the beards?!?” Right, you remember that our beginning-of-lockdown post said that “shaving was discouraged”. I am happy to report that we also succeeded in maintaining a state of minimal facial hygiene throughout the lockdown. And here are the pictures to prove it.

Did we meet our goals?

Remember the three success vectors that were posed at the beginning of this blog post?

- Did we meet our parity improvement goals for the 21 open-source frameworks we tested against => 99% average unit test parity?

- Did we meet performance improvement goals => 15% across the board performance gain?

- Were the razors put away for the entirety of the three weeks?

Here are your answers:

- Close YES! (using rounding to our advantage)

- Resounding YES! (no rounding needed)

- YES! Some of us are grayer than others.

The Future (2014)

Performance improvements will always be a core mantra for the HHVM team. That said, parity will be 1A on the priority scale for the team. We really do care about open source and the PHP community. 2014 will be a year where we go to great lengths to make HHVM first-class when it comes to both performance and parity. More unit tests passing for more open source projects and frameworks. Real world testing for PHP code out in the wild. Stay tuned for further updates on our progress and plans. Until then, Happy Holidays and Happy New Year!

Comments

- Yermo: May I suggest, sometime during the 2014 push, putting some efforts into improving documentation? From a users/developers point of view that's the largest drawback right now and I suspect is keeping quite a few people from trying the platform. (It took me many many hours of trial and error to get the platform running my site.) If more accessible and organized documentation were available, it would go a very long way to getting additional sites using the platform and would draw more help into the project. I would suggest the same for development docs. While the code is very well organized it is largely devoid of comments and there's little in terms of overview documentation making it quite time consuming for someone to come in and contribute meaningfully to the project. It would also pay dividends by freeing the developers from having to answer so many repeat questions. :)

- juicybacon: One question. Did the tests fail because some functions are not coded/broken or just general runtime borkyness?

- Joel Marcey: That is a high priority item for the HHVM team in 2014 and, actually, my personal #1 (or at least #1A) priority item. I will be diving neck deep into documentation come January.

- Nick: Very impressed with how much effort is being put into this. MUCH better than HipHop. Keep up the good work!

- Yermo: Excellent!

- Paul Tarjan: Mostly some functions haven't been written yet, or don't behave the same way in corner cases.

- asad hasan: Great Job!!! keep up the good work guys.

- Marco Pivetta: I'm starting to introduce HHVM in all my build matrixes - works awesome except for edge cases! Keep up with the good work!

- Riku: Very interested in this, keep up the good work guys!

- dandy: This is good. but where is Wordpress?

- Louis: I expect WordPress already has 100% or nearly so. When the HHVM blog first started, they gave plenty of instructions on how to get Wordpress running on HHVM. In addition, they use Wordpress to power the blog and they also use HHVM for the site. So... Wordpress is kind of a given ;-)

- Jeremy Wilson: I'd like to see Redis and PostgreSQL support.

- Brandon DuRette: WordPress is functional on HHVM, but the test suite is not at 100%. However, that's only part of the story. Plugins and themes in the WordPress ecosystem also depend on PHP parity. The testing matrix for the entire WP ecosystem blows up pretty quickly.

- Joel Marcey: Yeah, we know Wordpress is quite important and quite popular. :) The reason it did not make the cut for this round was the more complicated configuration. The testing requires an extra config file + database setup and access. We tried to stick with popular frameworks that ran phpunit tests "straight out of the box" so to speak, with minimal configuration changes. Our test runner that we created and used didn't handle that case... but we will get it there. Mediawiki initially had this problem, but then they showed us an --exclude-group=Database option that we used. Btw, I am getting the unit tests for Wordpress from here: https://github.com/kurtpayne/wordpress-unit-tests which seemed to be the best mirror I could find on Github.

- Paul M. Jones: I'd like to get the Aura components ( https://github.com/auraphp/ ) included in this suite. How does one do so? Many thanks to everyone involved here.

- Simon: Redis support is already available and there's a PostgreSQL extension you can use.

- Nitin: You guys did a awesome Job !! I would suggest you to work on its documentation, This is the only thing HHVM needs. You Rock!

- Paul Tarjan: Send us a pull request for the test script we linked to.

- Joel Marcey: Documentation is high priority for the team in 2014, starting in January :-)

- wwbmmm: Awesome!

- Randall Helzerman: Congrats on meeting your goals! We don't do PHP, but we do have some big C++ programs; are there any generic tools optimizing the cache profile of your executable? How exactly did you guys do that? Sounds like an interesting story, if its not a secret of the guild.

- Aris Setyawan: Hi, So a PHP framework which doesn't have PHPUnit base unit-testing, can't join HHVM parity test?

- Facebook's PHP compiler HipHop 2.3 faster and more compatible | Klaus Ahrens: News, Tips, Tricks and Photos: […] 2.3, HHVM has now taken another big step forward in terms of compatibility and passes around 98.58 percent of all unit tests from 21 different PHP projects. HHVM supports more and more of the open source projects at 100 […]

- Joeri: Really nice to see hhvm improving. As someone who has been programming php professionally for almost a decade it's great to see all the refreshing new ideas going on now in the php landscape. Are there plans to support oci8? I'm curious to see how hhvm handles our AutoCAD to SVG conversion code, but it's tied pretty deep to our oracle DB. I would also be interested to take our whole app for a spin, but it's 500 kloc of oracle-based code so again i can't do anything useful until there is oci8 support.

- CeBe: Great news! Once travis-ci has fixed some issues with PHPUnit I am going to run yii and yii2 against hhvm again and will report all things that are stopping us from having 100% test passing! :-)

- Paul Tarjan: When I cut 2.3.2 I'll include the fix for phpunit

- Bert Maher: Yes! We actually built a pretty nice toolchain for optimizing our binary layout, which we will (hopefully) write up here pretty soon. I do have some hope that it will apply to other big executables besides HHVM.

- Kevin: Running Drupal http://translate.google.com/translate?sl=auto&tl=en&js=n&prev=_t&hl=en&ie=UTF-8&u=http%3A%2F%2Fweb-rocker.de%2F2013%2F12%2Fdrupal-7-hhvm-vs-php-55-zend-opcache

- Darren L: This seems pretty damn awesome and fun!

- Yii Framework on HHVM › isFett.com: […] According to http://www.hhvm.com/blog/2813/we-are-the-98-5-and-the-16 from 19.12.2013, 99.11% of all unit tests pass without errors with the Yii framework. One more reason to look at the performance differences here. To do that, we create a small test project. […]

- Magento news for weeks 51/52 of 2013: […] not only continued to tune performance and compatibility, Magento 2 was also added to the list of tested software. In addition, HHVM can now also, as a FastCGI module behind a conventional web server, […]

- Grim: How about symfony2? I try use hhvm fastcgi mode ,but it's not ok. How use symfony2 on hhvm?

- Sascha: Can you clarify what tests you were running with Drupal and which version? I currently have two failing PHPUnit tests, created an issue on our side to improve/track HHVM compatibility: https://drupal.org/node/2165377

- HHVM HipHop Virtual Machine vs Apache2 |: […] So why not switch completely to HHVM right away? The question is easily answered: not all modules and platforms are compatible with HHVM yet. You can find a current overview here. […]

- Ajinkya: PHP is quite underrated, mostly from Java guys, but look at numbers, its the king.

- qianzhihe: Great news! thank you

- How will HHVM influence PHP? « {5} Setfive – Talking to the World: […] the last few weeks, there’s been a slew of HHVM related news from the “We are the 98.5%” post from the HHVM team to the Our HHVM Roadmap from the Doctrine team. With the increasing […]

- Christopher Svanefalk: Fantastic work guys! My company is already optimising our PHP codebase for full HHVM compatibility, and we plan to deploy it on our production servers before summer 2014. You are doing great things for PHP and the community, and I very much look forward to see how HHVM will progress during the year :)

- ming: Great stuffs. BTW, your tests include phpmyadmin, but, I get only blank screen using the latest build running as fastcgi daemon? Is there special configuration?

- Paul Tarjan: There are fixes in trunk, not yet in the release, that should make it work. Can you try the `hhvm-nightly` package and see if that works for you?

- Ming: Thanks Paul. The nightly build works and seems mostly functional. There is a segmentation fault and I will file that to the bugs forum. Another question. I understand that HHVM does not implement every modules and/or drivers that PHP offers, how do I know what is / is not included, and, is there any tools to check against the webapp codes?

- Paul Tarjan: function_exists() is a nice to know, and if you like to trust docs you can try https://github.com/facebook/hhvm/wiki/Extensions

- Results of the Facebook HHVM team's three-week lockdown | 我爱互联网: […] English original: we-are-the-98-5-and-the-16 […]

- HHVM: The Next Six Months « HipHop Virtual Machine: […] We’ve been making steady progress on HHVM’s compatibility with PHP in the wild, but we still have a lot of work ahead of us. We’re using unit test pass rates as a proxy for success measurement, but you can help by adding HHVM to your Travis configuration, and reporting bugs and issues through GitHub. We are resourced to help support a couple of major HHVM deployments, which we hope has the side effect of exposing us to “non-Facebook” deployment and maintenance challenges. […]

- Implementing MySQLi « HipHop Virtual Machine: […] After warming up with the parser, I was ready to start my big project: implement MySQLi. This has been a long requested feature for HHVM. And, this extension is required to help meet our compatibility goals. […]

- Tracking Parity « HipHop Virtual Machine: […] runner are a nice representative sample of real world PHP (and we are adding more). We showed a static snapshot of our test parity to these frameworks late last year. As HHVM is continually developed, it is […]

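- 赵伊凡’s blog: Facebook launches a programming language: Hack | 赵伊凡's Blog: […] HHVM is still a PHP runtime, and we intend to keep it that way. In fact, we are working hard to reach parity with PHP 5. One of HHVM's top priorities is to run unmodified PHP 5 source code, both for the community and because we rely on many third-party PHP libraries under the hood. HHVM is now a runtime that supports both PHP and Hack, so you can start to take advantage of Hack's new features incrementally. […]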

- Adeel Ahmad: Although you have made progress with PHP compatibility, its a hard hitting fact that still you have to go a long way. I am using HHVM and still it requires much to be done.

- PHP 6: Zend Engine or HHVM? - entwickler.de: […] without pause on further increasing coverage of the most important frameworks and tools; numerous projects are already 100 percent compatible with HHVM. But the projects themselves are also making great efforts on their codebase for HipHop […]

- HHVM in the real PHP world - entwickler.de: […] runner are a nice representative sample of real world PHP (and we are adding more). We showed a static snapshot of our test parity to these frameworks late last year. As HHVM is continually developed, it is […]

- If you develop in PHP and have some time, try HHVM | Sopa de bits: […] on December 19th, an article was published on the HHVM blog about running the unit tests of various frameworks on HHVM. From the start of those tests to the final result, the improvement has been notable in some […]

- HHVM on Magento and Symfony | Sopa de bits: […] most stars on GitHub, is another of those blessed with the performance improvement. In addition to the improvements published in the tests on the HHVM blog, there are other, more basic tests done with ApacheBench. Worth mentioning is this […]